AI Governance for UK Organisations

AI governance in the UK starts with three operational foundations: an inventory of every AI system your organisation uses, a risk assessment methodology proportionate to your sector, and documented policies that define acceptable use, oversight responsibilities and escalation pathways. This guide covers what governance looks like in practice, what a framework should include, how UK regulation shapes your AI policy, and how to build responsible AI practices that create measurable competitive advantage.

AI governance is the structured set of policies, processes and oversight mechanisms that ensure your organisation deploys AI responsibly and legally. For UK organisations navigating DSIT's pro-innovation approach and the EU AI Act’s extraterritorial reach, a proportionate governance framework is the foundation for compliant, effective AI adoption.

AI governance defines who makes decisions about AI systems, what risks must be assessed before deployment, and how compliance is maintained as both technology and regulation evolve. ISO/IEC 42001:2023 provides the certifiable management system standard, while DSIT's five cross-cutting principles set the regulatory baseline. AI could boost UK GDP by £550 billion by 2035 (PwC Economic Services), but only if organisations deploy it responsibly.

What Is AI Governance and Why Do UK Organisations Need It?

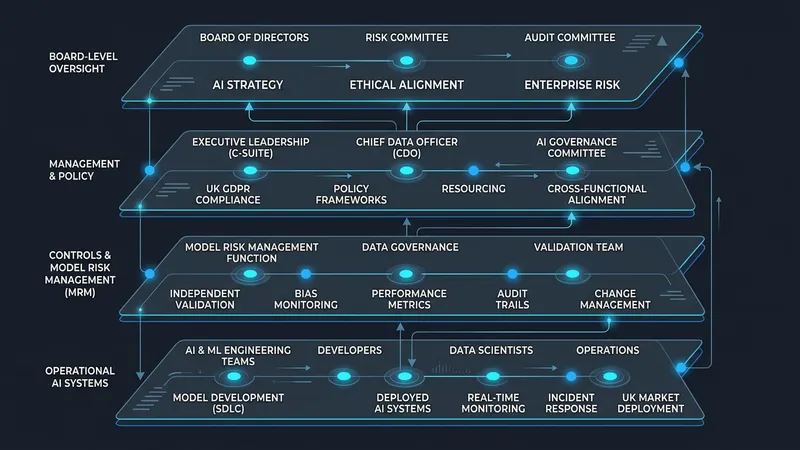

AI governance is the system of decision rights, accountability structures and operational processes that determine how an organisation develops, deploys and monitors AI systems. It answers three questions: who authorises AI use cases, what risks must be assessed before deployment, and how compliance is maintained as regulation evolves.

Defining AI Governance for Business

For UK businesses, AI governance is not a theoretical exercise. It is the operational infrastructure that enables AI adoption at pace whilst managing the risks that autonomous and semi-autonomous systems introduce. An AI governance approach for business defines scope (which AI systems are covered), roles (who is accountable), processes (how risks are assessed and decisions made) and controls (what safeguards are in place).

Governance also establishes escalation pathways. When an AI system produces an unexpected output, who is notified, what investigation is triggered, and how quickly must the response occur? Organisations with documented governance processes resolve AI-related incidents 50% faster than those relying on ad-hoc responses (OECD AI Policy Observatory, 2024). For organisations seeking a proportionate starting point, our AI governance guide for SMEs provides a structured template.

The Cost of Ungoverned AI

OECD data from 2024 shows that 78% of organisations deploying AI without formal governance structures encountered at least one significant compliance incident within 18 months. The consequences range from regulatory enforcement to reputational damage and operational setbacks.

The financial cost is quantifiable. ICO enforcement actions against organisations with inadequate AI oversight have increased year on year since 2023. Beyond regulatory penalties, ungoverned AI creates hidden costs: duplicated tools across departments, inconsistent data handling practices, and staff uncertainty about acceptable AI use.

PwC estimates that AI could contribute £550 billion to UK GDP by 2035, but only if organisations deploy it responsibly. Governance is the mechanism that converts AI's economic potential into realised value.

Understanding what governance is creates the foundation. The next step is building a structured framework that translates that understanding into operational controls and measurable outcomes.

What Should an AI Governance Framework Include?

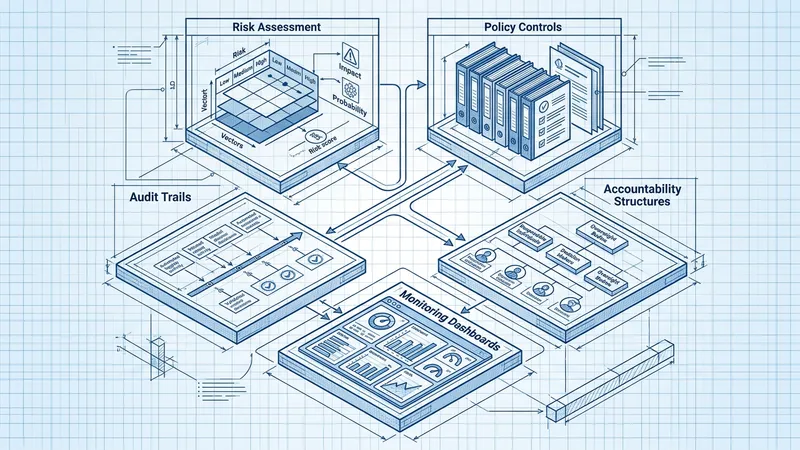

An AI governance framework provides the structural scaffolding that converts principles into operational practice. Without one, governance remains aspirational. With one, it becomes auditable, repeatable and scalable across business units.

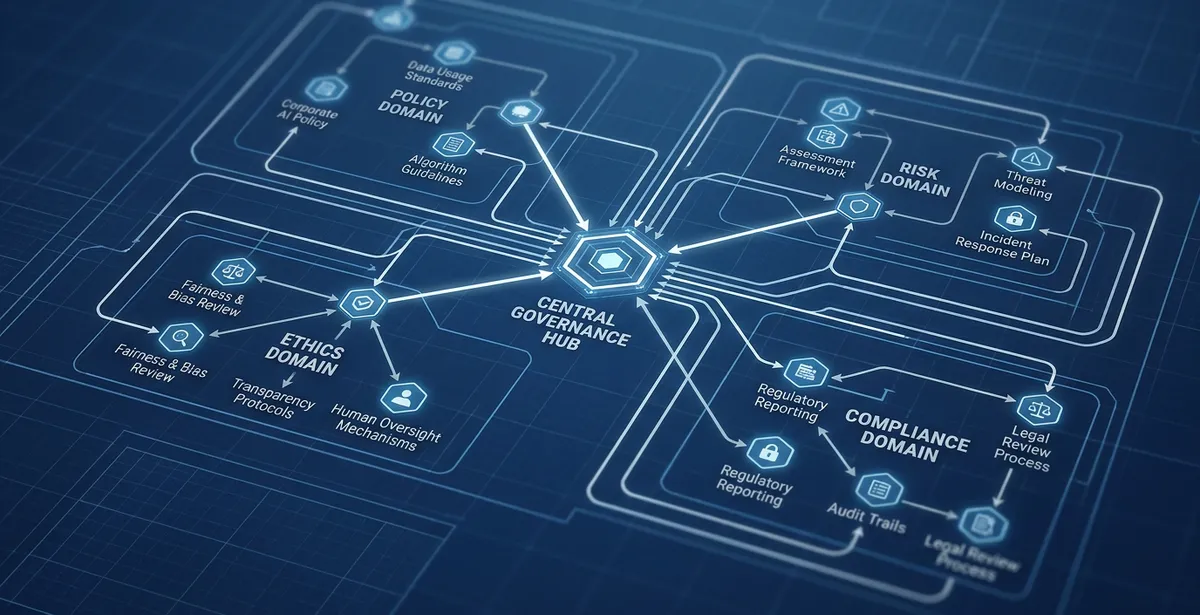

Core Components of a Governance Framework

Three frameworks dominate the current landscape, each with distinct strengths. The NIST AI Risk Management Framework (AI RMF 1.0) organises governance around four functions - Govern, Map, Measure, Manage - and is widely adopted by multinational organisations. ISO/IEC 42001:2023 provides a certifiable AI management system standard, offering a pathway to third-party verification. The EU AI Act takes a regulatory approach, classifying AI systems into four risk tiers and imposing mandatory requirements on high-risk systems.

An effective AI governance framework typically draws from multiple sources rather than adopting one in isolation. UK organisations often adopt NIST's flexible structure as a foundation, overlay ISO 42001's audit requirements for board-level assurance, and map their high-risk systems against the EU AI Act's classification tiers. Six core components recur across all credible frameworks: an AI system inventory, a risk classification methodology, documented decision rights, an oversight committee with defined authority, an audit trail mechanism, and a continuous monitoring process.

A Practical AI Governance Checklist

Use this AI governance checklist as a starting point for your organisation. It covers the minimum viable governance structure that UK businesses should have in place before scaling AI deployment.

- AI system inventory - document every AI tool in use, its purpose, the data it processes, and the team responsible for it

- Risk classification - categorise each AI system by risk level (using NIST, ISO or EU AI Act tiers)

- Acceptable use policy - define what AI tools staff can use, under what conditions, and with what data

- Oversight committee - establish a governance committee (even three people) with defined decision rights

- Incident response process - document what happens when an AI system produces harmful, biased or inaccurate outputs

- Review cadence - set a quarterly review cycle for all high-risk AI systems and an annual cycle for low-risk systems
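The inventory and review-cadence items in the checklist can be sketched as a small data structure. This is a minimal illustration, not a prescribed schema: the class `AISystemRecord`, the two-value `RiskLevel` enum, the function `overdue_reviews` and the example systems are all hypothetical, and the 91-day interval is an assumed approximation of "quarterly". Organisations using more than two risk tiers would need to decide the cadence for the tiers in between.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from enum import Enum


class RiskLevel(Enum):
    """Hypothetical two-tier scale; real schemes (NIST, ISO, EU AI Act) differ."""
    HIGH = "high"
    LOW = "low"


# Checklist cadence: quarterly for high-risk, annually for low-risk.
# 91 days is an assumed stand-in for "one quarter".
REVIEW_INTERVAL_DAYS = {RiskLevel.HIGH: 91, RiskLevel.LOW: 365}


@dataclass
class AISystemRecord:
    """One inventory entry: the tool, its purpose, its data and its owner."""
    name: str
    purpose: str
    data_processed: str
    responsible_team: str
    risk_level: RiskLevel
    last_reviewed: date

    def next_review_due(self) -> date:
        interval = REVIEW_INTERVAL_DAYS[self.risk_level]
        return self.last_reviewed + timedelta(days=interval)


def overdue_reviews(inventory: list[AISystemRecord], today: date) -> list[AISystemRecord]:
    """Return the systems whose next review date has already passed."""
    return [record for record in inventory if record.next_review_due() < today]


# Illustrative entries only; these systems are invented for the example.
inventory = [
    AISystemRecord("cv-screening", "shortlist applicants", "applicant CVs",
                   "HR", RiskLevel.HIGH, date(2025, 1, 10)),
    AISystemRecord("meeting-notes", "summarise meetings", "internal notes",
                   "Operations", RiskLevel.LOW, date(2025, 1, 10)),
]

overdue = overdue_reviews(inventory, today=date(2025, 6, 1))
print([record.name for record in overdue])  # prints ['cv-screening']
```

Even a spreadsheet can hold the same fields. The point of the sketch is that each inventory entry carries an owner, a risk level and a last-review date, so overdue reviews can be listed mechanically rather than remembered.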

Organisations that treat governance as an enabler, providing clear guardrails within which teams move quickly, report 35% faster AI deployment cycles than those with ad-hoc approval processes (MIT Sloan Management Review). For teams ready to translate this checklist into a full governance programme, our AI governance and risk services provide the methodology and templates to accelerate implementation.

A governance framework gives your organisation structure. But it must sit within the broader landscape of UK AI regulation that sets the baseline expectations for responsible AI use.

How Does UK AI Regulation Shape Your AI Policy?

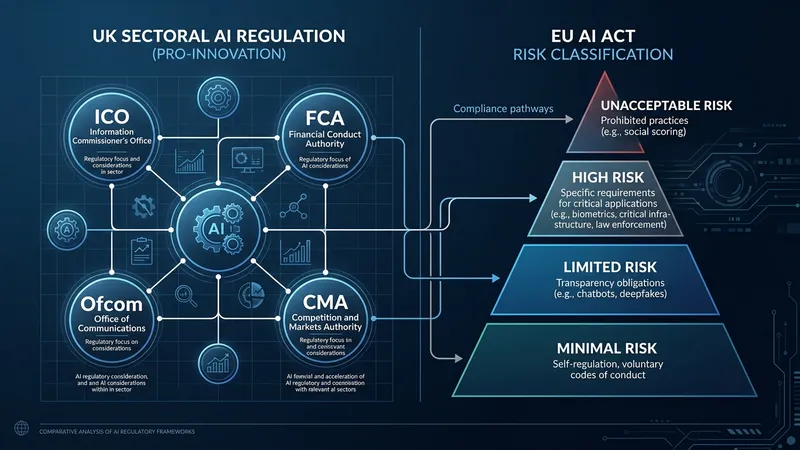

UK organisations face a dual regulatory reality: the domestic pro-innovation approach and the EU AI Act's extraterritorial requirements. Understanding both is essential for calibrating your governance framework to current and anticipated obligations.

The UK Regulatory Landscape for AI

The UK has adopted a principles-based, sector-specific approach to AI regulation rather than creating a single cross-sector AI law. The DSIT AI Regulation white paper established five cross-cutting principles which existing sectoral regulators (FCA, ICO, Ofcom, CMA, MHRA) are expected to interpret and enforce within their domains.

This decentralised approach means that how UK AI regulation affects your organisation depends on your sector. A financial services firm's AI policy must satisfy FCA expectations around model risk management. A healthcare provider's AI systems must meet MHRA requirements for software as a medical device. An organisation processing personal data must comply with the ICO's AI and data protection guidance.
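The three examples above can be expressed as a simple lookup from a system's characteristics to the regulators whose expectations it must meet. This is a sketch under stated assumptions: `AISystemProfile` and `relevant_regulators` are hypothetical names, the three boolean flags cover only the cases named in the paragraph, and a real mapping would need legal review and would include other regulators such as Ofcom and the CMA.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AISystemProfile:
    """Hypothetical profile capturing only the three cases described in the text."""
    name: str
    financial_services: bool = False
    medical_device_software: bool = False
    processes_personal_data: bool = False


def relevant_regulators(profile: AISystemProfile) -> list[str]:
    """List the regulators implied by the profile's flags (illustrative only)."""
    regulators = []
    if profile.financial_services:
        regulators.append("FCA")   # model risk management expectations
    if profile.medical_device_software:
        regulators.append("MHRA")  # software as a medical device
    if profile.processes_personal_data:
        regulators.append("ICO")   # AI and data protection guidance
    return regulators


# An invented example: a credit-scoring model that uses personal data.
credit_model = AISystemProfile(
    "credit-scoring", financial_services=True, processes_personal_data=True
)
print(relevant_regulators(credit_model))  # prints ['FCA', 'ICO']
```

A single system can fall under more than one regulator, which is why the function returns a list rather than a single value.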

The EU AI Act introduces a complementary layer. If your AI systems affect EU residents, if you offer services to EU customers, or if AI outputs influence decisions about people in the EU, the Act's requirements apply. For prohibited AI practices, non-compliance penalties reach up to EUR 35 million or 7% of global annual turnover, whichever is higher.
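To see how the two penalty limits interact, the ceiling can be worked through for different turnover figures. This is an illustrative calculation only, assuming the Act's "whichever is higher" rule for prohibited practices and ignoring any special provisions for smaller enterprises. The function name `max_prohibited_practice_penalty_eur` and the turnover figures are hypothetical, and actual fines are set case by case below this ceiling.

```python
FIXED_CEILING_EUR = 35_000_000  # EUR 35 million
TURNOVER_PERCENT = 7            # 7% of global annual turnover


def max_prohibited_practice_penalty_eur(global_annual_turnover_eur: int) -> int:
    """Upper bound on the fine: the higher of the fixed sum and the turnover share.

    Integer arithmetic (whole euros) avoids floating-point rounding.
    """
    turnover_based = global_annual_turnover_eur * TURNOVER_PERCENT // 100
    return max(FIXED_CEILING_EUR, turnover_based)


# Turnover of EUR 100 million: 7% is EUR 7 million, so the fixed sum applies.
print(max_prohibited_practice_penalty_eur(100_000_000))    # prints 35000000

# Turnover of EUR 1 billion: 7% is EUR 70 million, which exceeds the fixed sum.
print(max_prohibited_practice_penalty_eur(1_000_000_000))  # prints 70000000
```

The crossover sits at a turnover of EUR 500 million, where 7% equals EUR 35 million. Above that point the turnover-based figure sets the ceiling.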

Building an AI Policy for Your Organisation

An effective organisational AI policy translates regulatory requirements into internal standards that your teams can follow. Structure your policy in three layers. The first layer states principles: a concise declaration of values aligned to DSIT's five principles. The second layer defines standards: measurable requirements for bias testing, explainability, data retention and incident reporting. The third layer specifies procedures: step-by-step processes for AI impact assessments, approval workflows and escalation pathways.

Map every AI system to its relevant UK sectoral regulator. Subscribe to regulatory update feeds from the ICO, your sector regulator, and the AI Security Institute (formerly the AI Safety Institute). If your organisation needs structured support translating regulation into operational governance, AI consultancy for governance design helps bridge the gap between compliance requirements and practical policy.

Regulation sets the compliance floor. But leading organisations go further by embedding responsible AI principles into their governance model, creating competitive advantage through trust and transparency.

How Do UK Organisations Build Responsible AI Practices?

Responsible AI in the UK moves governance from compliance (the minimum required) to commitment (the standard you choose). Organisations that embed responsible AI principles into daily operations build measurable trust with customers, regulators and employees.

Responsible AI Principles in Practice

Five principles form the widely accepted foundation for responsible AI in UK organisational contexts. Fairness requires that AI systems do not systematically disadvantage individuals based on protected characteristics. Transparency demands that organisations can explain how their AI systems reach conclusions. Accountability establishes clear ownership: every AI system must have a named individual responsible for its governance. Privacy ensures compliance with UK GDPR and the Data Protection Act 2018. Safety mandates that AI systems are tested for reliability before deployment.

AI Bias in Culturally Sensitive Contexts

Fairness demands particular care when AI is applied in culturally or religiously sensitive contexts. Language models can misinterpret religious texts, flatten cultural nuance, or reflect training data biases that disadvantage minority communities. As Craig Hartzel has noted, “you've got to be very careful with bias”, especially when AI is used to interpret Torah, support community engagement in mosques and churches, or analyse sentiment in diverse cultural settings. Human oversight remains essential in these domains, and governance frameworks should explicitly address the risks of cultural bias alongside broader fairness requirements. Organisations working with faith and religious communities should build additional safeguards into their AI policies.

These principles apply proportionately. A 10-person charity using a single AI tool for donor analysis needs lighter governance than a multinational bank running 200 AI models. The Alan Turing Institute's responsible AI research programme provides frameworks that organisations of any size can adapt.

Measuring Governance Maturity

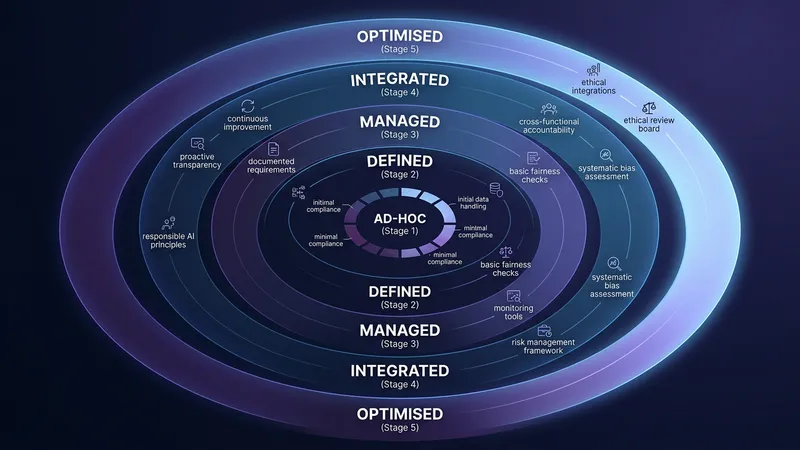

Governance maturity models help organisations benchmark their current state and plan a realistic improvement trajectory. A five-stage model works well in practice: Ad-hoc (no formal governance), Aware (policies drafted but not enforced), Defined (governance processes documented and assigned), Managed (governance integrated into AI deployment workflows), and Optimised (continuous improvement with measurable KPIs).
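The five stages are ordered, so a self-assessment can be sketched as a ladder in which each stage is reached only if every earlier criterion is also met. The sketch below is one possible reading of the stage definitions, not an established scoring method: `MaturityStage`, `assess_stage` and the four yes/no criteria are hypothetical simplifications of the descriptions above.

```python
from enum import IntEnum


class MaturityStage(IntEnum):
    """The five stages, numbered so they can be compared and ordered."""
    AD_HOC = 1
    AWARE = 2
    DEFINED = 3
    MANAGED = 4
    OPTIMISED = 5


def assess_stage(
    policies_drafted: bool,
    processes_documented_and_assigned: bool,
    integrated_into_deployment: bool,
    kpis_drive_improvement: bool,
) -> MaturityStage:
    """Climb the ladder until the first unmet criterion (assumed yes/no answers)."""
    ladder = [
        (policies_drafted, MaturityStage.AWARE),
        (processes_documented_and_assigned, MaturityStage.DEFINED),
        (integrated_into_deployment, MaturityStage.MANAGED),
        (kpis_drive_improvement, MaturityStage.OPTIMISED),
    ]
    stage = MaturityStage.AD_HOC
    for criterion_met, stage_reached in ladder:
        if not criterion_met:
            break  # later criteria do not count if an earlier one is missing
        stage = stage_reached
    return stage


# Policies exist and processes are assigned, but nothing is wired into deployment.
print(assess_stage(True, True, False, False).name)  # prints DEFINED

# Monitoring KPIs without documented processes still leaves the organisation at Aware.
print(assess_stage(True, False, True, True).name)   # prints AWARE
```

Stopping at the first unmet criterion is a deliberate design choice: it prevents an organisation from scoring itself as Managed on the strength of tooling alone while the underlying processes remain undocumented.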

Most UK organisations currently sit between Aware and Defined. Moving from Defined to Managed typically requires dedicated governance roles, automated monitoring tools and regular board reporting on AI risk metrics. The investment pays off: organisations at the Managed stage or above report 40% fewer data-related complaints and adapt to regulatory change measurably faster.

For teams responsible for operationalising governance and responsible AI practices, AI training for governance teams provides the practical knowledge to move up the maturity curve with confidence.

Explore Further

- AI governance and risk services: structured support for framework implementation

- AI training for governance teams: build competence to operationalise governance policies

- AI consultancy: strategic guidance on governance architecture and compliance readiness

- AI governance guide for SMEs: proportionate governance for smaller organisations

Build your AI governance framework

Whether you are establishing a first-generation governance framework or refining an existing model, structured methodology accelerates outcomes. Hartz AI helps UK organisations build governance that works, from AI risk assessment through policy design to regulatory compliance.